Google responds to study suggesting millions of its AI Overviews are factually wrong

Google has responded after a recent report found that its AI Overviews are getting things wrong tens of millions of times per day.

Google search has become the go-to when you need some information fast, like when your pal is being loud and wrong about what year Rocky came out, or when you really want to make banana bread but you don’t have a clue where to start.

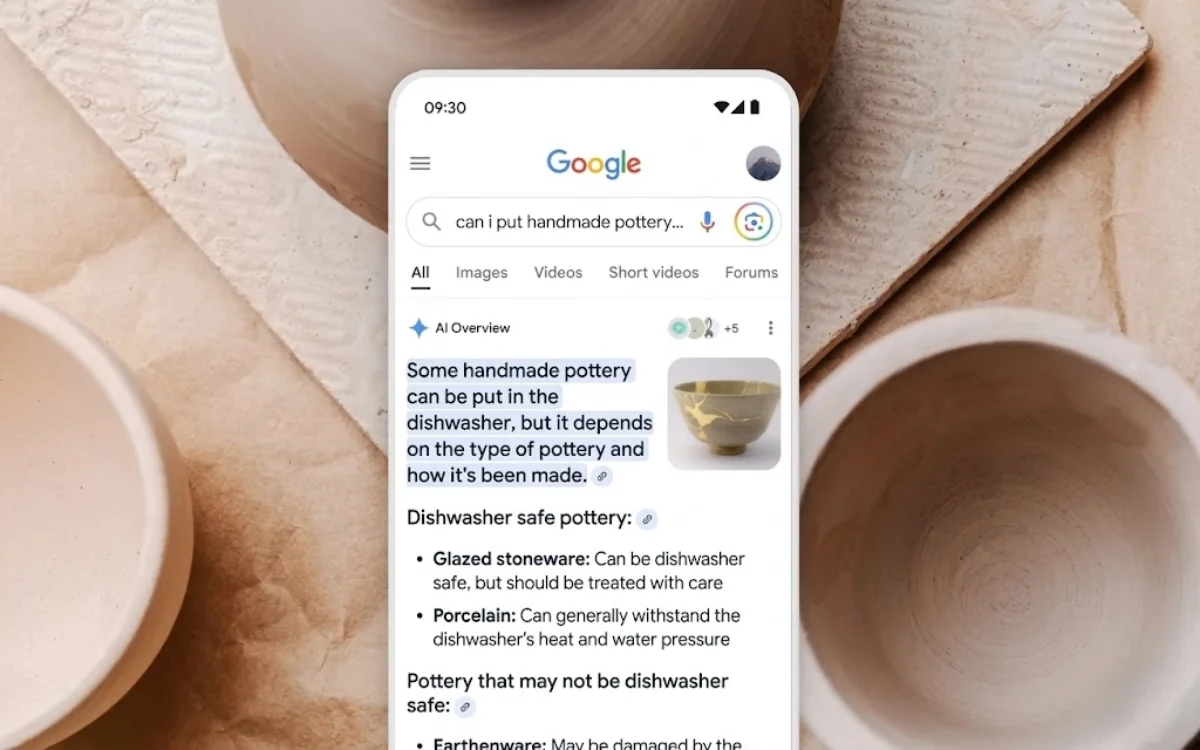

In more recent times, Google search has implemented an AI Overview feature, which can give you the answer you need without having to click through to another page.

However, a new report from The New York Times has found that AI Overviews often get things wrong.

A new report found that AI Overviews aren’t as accurate as you might have thought

Google launched the AI Overview feature in 2024, and it got off to a bit of a rocky start with some users noticing it would bring up inaccurate and occasionally bizarre responses, like one man being told to put glue on his pizza to help the toppings stick. (Please don’t try that at home.)

But as the technology has progressed, Google’s AI Overview has become much better at giving helpful and accurate information.

However, a report from The New York Times has suggested there’s still a way to go.

The publication worked with startup Oumi to analyze AI Overviews using the SimpleQA evaluation, which was created by OpenAI and is used to rank the factual accuracy of generative AI models.

When Oumi started the test, AI Overview was using Gemini 2.5, and the results showed that it had an 85 percent accuracy rate.

It redid the test when the Gemini 3 update was released and found that AI Overviews now responded with 91 percent accuracy.

Now, while a 91 percent success rate might sound pretty solid, it means that just under one out of every 10 requests is given the wrong answer.

And when something is handling as many requests as Google search, that adds up to tens of millions of wrong answers a day.
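To see how that arithmetic plays out, here is a rough sketch in Python. The two accuracy rates come from the study, but the daily volume below is a purely hypothetical placeholder, since the report as described here doesn't give an exact figure for how many AI Overviews Google serves each day. The function names are also just illustrative.

```python
def wrong_per_day(daily_answers: float, accuracy: float) -> float:
    """Expected number of wrong answers per day at a given accuracy rate."""
    return daily_answers * (1 - accuracy)


def min_volume_for(wrong_target: float, accuracy: float) -> float:
    """Daily volume needed to produce at least `wrong_target` wrong answers."""
    return wrong_target / (1 - accuracy)


# Accuracy rates reported in the study.
ACCURACY = {"Gemini 2.5": 0.85, "Gemini 3": 0.91}

# ASSUMPTION: a hypothetical round number, not a figure from the report.
HYPOTHETICAL_DAILY_ANSWERS = 1_000_000_000

for model, acc in ACCURACY.items():
    wrong = wrong_per_day(HYPOTHETICAL_DAILY_ANSWERS, acc)
    print(f"{model}: about {wrong:,.0f} wrong answers per day")

# Working backwards: how many answers a day would it take for a
# 9 percent error rate to reach 10 million wrong answers?
needed = min_volume_for(10_000_000, ACCURACY["Gemini 3"])
print(f"Volume needed for 10 million wrong answers: about {needed:,.0f}")
```

Working backwards is the more useful half: at 91 percent accuracy, it only takes a little over 111 million answers a day to hit 10 million wrong ones, so the "tens of millions" claim holds for any volume comfortably above that.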

The report shared several examples of incorrect AI Overviews. In one, when asked when Bob Marley’s home was converted into a museum, it answered 1987, when the correct answer is 1986.

Google has issued a response to the study

Google responded to the report, telling The New York Times that the study wasn’t an accurate reflection of how people search online.

“This study has serious holes,” spokesperson Ned Adriance told the publication.

“It doesn’t reflect what people are actually searching on Google.”

It’s also important to note that Google does warn users to check the accuracy of its responses.

“AI can make mistakes, so double-check responses,” a note explains along the bottom of the AI Overview.

That’s something to keep in mind next time you’re trying to settle an argument with the help of Google search.
